A few servers

- Addition server (code)

- Provides add/3 and stop/1 operations

-module(addition_server).

-export([start/0, add/3, stop/1]).

start() ->

spawn(fun loop/0).

loop() ->

receive

{add, From, Ref, X, Y} ->

From ! {add_reply, Ref, X + Y},

loop();

stop -> ok

end.

add(Server, A, B) ->

Ref = make_ref(),

Server!{add, self(), Ref, A, B},

receive

{add_reply, Ref, Res} -> Res

end.

stop(Server) -> Server!stop.

- Counter server (code)

- Provides incr/1, read/1 and stop/1 operations

-module(counter_server).

-export([start/0, incr/1, read/1, stop/1]).

start() ->

spawn(fun () -> loop(0) end).

loop(N) ->

receive

{incr, From, Ref} ->

From ! {incr_reply, Ref},

loop(N + 1);

{read, From, Ref} ->

From ! {read_reply, Ref, N},

loop(N);

stop -> ok

end.

incr(Server) ->

Ref = make_ref(),

Server!{incr, self(), Ref},

receive

{incr_reply, Ref} -> ok

end.

read(Server) ->

Ref = make_ref(),

Server!{read, self(), Ref},

receive

{read_reply, Ref, X} -> X

end.

stop(Server) -> Server!stop.

- Terminal print server (code)

- Provides print/2, printed/1 and stop/1 operations

-module(tprint_server).

-export([start/0, print/2, printed/1, stop/1]).

start() ->

spawn(fun () -> loop(0) end).

loop(N) ->

receive

{print, From, Ref, ToPrint} ->

io:format("~p~n", [ToPrint]),

From ! {print_reply, Ref},

loop(N + 1);

{printed, From, Ref} ->

From ! {printed_reply, Ref, N},

loop(N);

stop -> ok

end.

print(Server, ToPrint) ->

Ref = make_ref(),

Server!{print, self(), Ref, ToPrint},

receive

{print_reply, Ref} -> ok

end.

printed(Server) ->

Ref = make_ref(),

Server!{printed, self(), Ref},

receive

{printed_reply, Ref, X} -> X

end.

stop(Server) -> Server!stop.

A generic server

The servers above follow the same pattern, and most of the code is boilerplate.

Instead of repeating the code, we will implement a generic parametrised server.

Note: Erlang/OTP has a standard behaviour called gen_server. What is presented here is a simplified module, genserver, which is similar in spirit to the standard gen_server but quite different in the details. The version of genserver presented here is used in lab 3.

start(State, F) ->

spawn(fun() -> loop(State, F) end).

loop(State, F) ->

receive

{request, From, Ref, Data} ->

case F(State, Data) of

{reply, R, NewState} ->

From!{result, Ref, R},

loop(NewState, F)

end;

stop -> ok

end.

request(Pid, Data) ->

Ref = make_ref(),

Pid!{request, self(), Ref, Data},

receive

{result, Ref, Result} ->

Result

end.

stop(Pid) ->

Pid ! stop,

ok.
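The contract between the generic server and its handler F can be made concrete with a small self-contained sketch: F takes the current state and the request data, and returns {reply, Reply, NewState}. The module name genserver_demo and the running-total handler are illustrative assumptions, not part of the course code; the server functions are copied from above so that the module compiles on its own.

```erlang
-module(genserver_demo).
-export([run/0]).

%% Generic server, as above.
start(State, F) ->
    spawn(fun() -> loop(State, F) end).

loop(State, F) ->
    receive
        {request, From, Ref, Data} ->
            case F(State, Data) of
                {reply, R, NewState} ->
                    From ! {result, Ref, R},
                    loop(NewState, F)
            end;
        stop -> ok
    end.

request(Pid, Data) ->
    Ref = make_ref(),
    Pid ! {request, self(), Ref, Data},
    receive
        {result, Ref, Result} -> Result
    end.

stop(Pid) ->
    Pid ! stop,
    ok.

%% Hypothetical handler: the state is a running total.
handle(Total, {add, X}) -> {reply, ok, Total + X};
handle(Total, total)    -> {reply, Total, Total}.

%% Adds 2 and 3 to an initial total of 0 and reads the total back.
run() ->
    S = start(0, fun handle/2),
    ok = request(S, {add, 2}),
    ok = request(S, {add, 3}),
    Total = request(S, total),
    stop(S),
    Total.
```

Calling genserver_demo:run() should return 5. Note that handle/2 contains no send or receive; all communication lives in the generic part.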

Using genserver

The code for a generic server takes care of the communication, faults, and upgrades

Programmers then only focus on the functionality

- No communication primitives

The addition server (code)

start() -> genserver:start(none, fun handle/2).

handle(none, {add, X, Y}) -> {reply, {add_reply, X + Y}, none}.

add(Server, A, B) ->

{add_reply, C} = genserver:request(Server, {add, A, B}),

C.

stop(Server) -> genserver:stop(Server).

1> S = addition_genserver:start().

<0.45.0>

2> addition_genserver:add(S, 2, 3).

5

The counter server (code)

start() -> genserver:start(0, fun handle/2).

handle(N, incr) -> {reply, incr_reply, N+1};

handle(N, read) -> {reply, {read_reply, N}, N}.

incr(Server) ->

genserver:request(Server, incr),

ok.

read(Server) ->

{read_reply, M} = genserver:request(Server, read),

M.

stop(Server) -> genserver:stop(Server).

The terminal print server (code)

start() -> genserver:start(0, fun handle/2).

handle(N, {print, ToPrint}) ->

io:format("~p~n", [ToPrint]),

{reply, print_reply, N+1};

handle(N, printed) -> {reply, {printed_reply, N}, N}.

print(Server, ToPrint) ->

genserver:request(Server, {print, ToPrint}),

ok.

printed(Server) ->

{printed_reply, M} = genserver:request(Server, printed),

M.

stop(Server) -> genserver:stop(Server).

Robust generic server

- We will add two features to the generic server (code)

- Handling errors when the server code fails

- Support for upgrading the code of a running server

loop(State, F) ->

receive

{request, From, Ref, Data} ->

case catch(F(State, Data)) of

{'EXIT', Reason} ->

From!{exit, Ref, Reason},

loop(State, F);

{reply, R, NewState} ->

From!{result, Ref, R},

loop(NewState, F)

end;

{update, From, Ref, NewF} ->

From ! {ok, Ref},

loop(State, NewF);

stop -> ok

end.

request(Pid, Data) ->

Ref = make_ref(),

Pid!{request, self(), Ref, Data},

receive

{result, Ref, Result} ->

Result;

{exit, Ref, Reason} ->

error(Reason)

end.

update(Pid, Fun) ->

Ref = make_ref(),

Pid!{update, self(), Ref, Fun},

receive

{ok, Ref} -> ok

end.
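Both features can be exercised in one short session. The sketch below is an assumption-laden demo, not course code: the module name robust_demo and the handlers v1/2 and v2/2 are hypothetical, and the robust server functions are repeated so the module is self-contained. Version v1 has no clause for reset, so the first reset request fails in the handler; the server reports the failure, keeps its state, and later accepts v2 as new code.

```erlang
-module(robust_demo).
-export([run/0]).

start(State, F) ->
    spawn(fun() -> loop(State, F) end).

%% Robust generic server loop, as above.
loop(State, F) ->
    receive
        {request, From, Ref, Data} ->
            case catch(F(State, Data)) of
                {'EXIT', Reason} ->
                    From ! {exit, Ref, Reason},
                    loop(State, F);          % state is kept after a failure
                {reply, R, NewState} ->
                    From ! {result, Ref, R},
                    loop(NewState, F)
            end;
        {update, From, Ref, NewF} ->
            From ! {ok, Ref},
            loop(State, NewF);               % same state, new handler
        stop -> ok
    end.

request(Pid, Data) ->
    Ref = make_ref(),
    Pid ! {request, self(), Ref, Data},
    receive
        {result, Ref, Result} -> Result;
        {exit, Ref, Reason} -> error(Reason)
    end.

update(Pid, Fun) ->
    Ref = make_ref(),
    Pid ! {update, self(), Ref, Fun},
    receive
        {ok, Ref} -> ok
    end.

%% Hypothetical handler, version 1: a counter without reset.
v1(N, incr) -> {reply, ok, N + 1};
v1(N, read) -> {reply, N, N}.

%% Hypothetical handler, version 2: adds a reset operation.
v2(N, incr)   -> {reply, ok, N + 1};
v2(N, read)   -> {reply, N, N};
v2(_N, reset) -> {reply, ok, 0}.

run() ->
    S = start(0, fun v1/2),
    ok = request(S, incr),
    %% v1 cannot handle reset: the client sees an error, the server survives.
    Crashed = try request(S, reset) of
                  _ -> false
              catch
                  error:_ -> true
              end,
    1 = request(S, read),                    % state was not lost
    ok = update(S, fun v2/2),
    ok = request(S, reset),                  % now supported
    Final = request(S, read),
    S ! stop,
    {Crashed, Final}.
```

Calling robust_demo:run() should return {true, 0}: the failed request is turned into an error in the client only, and the upgrade takes effect without restarting the server process.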

Concurrency patterns revisited

Message passing

Barrier synchronisation

Resource allocation

Readers and writers

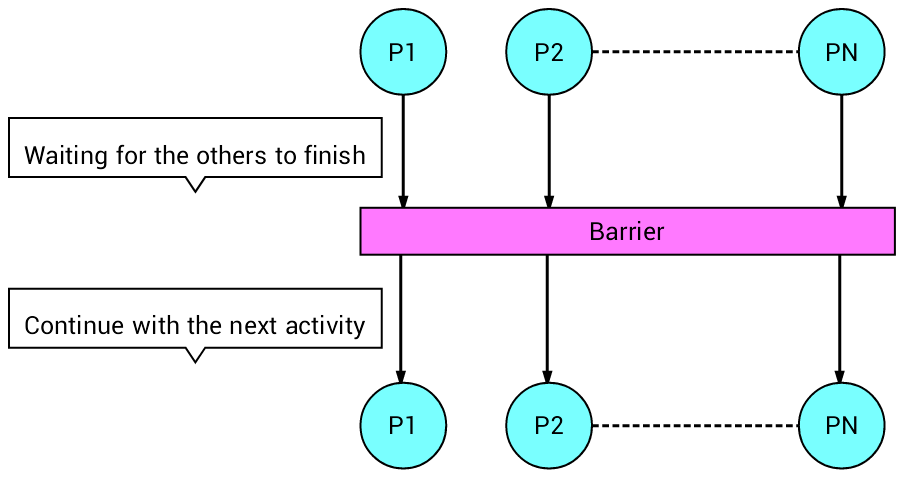

Barrier synchronisation revisited

N processes must wait for the slowest before continuing with the next activity

Widely used in parallel programming

reach_wait(Server) ->

Ref = make_ref(),

Server ! {reach, self(), Ref},

receive

{ack, Ref} -> true

end.

start(N) ->

Pid = spawn(fun() -> coordinator(N,N,[]) end),

register(coordinator, Pid).

coordinator(N,0,Ps) ->

[ From ! {ack, Ref} || {From, Ref} <- Ps ],

coordinator(N,N,[]) ;

coordinator(N,M,Ps) ->

receive

{reach, From, Ref} ->

coordinator(N,M-1, [ {From,Ref} | Ps])

end.

- Compared with the semaphore solution, there is no problem with a fast process stealing signals: each ack is addressed to a specific process and tagged with its Ref
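A minimal sketch of the barrier in use, under a few assumptions: the module name barrier_demo is hypothetical, the coordinator is addressed by pid instead of the registered name coordinator (so the demo can be run repeatedly), and the 100 ms timeout is only there to observe that nobody has passed yet. N-1 processes reach the barrier first; the caller then arrives as the slowest process and releases everyone.

```erlang
-module(barrier_demo).
-export([run/0]).

reach_wait(Server) ->
    Ref = make_ref(),
    Server ! {reach, self(), Ref},
    receive
        {ack, Ref} -> true
    end.

%% Coordinator, as above: M counts the processes still missing.
coordinator(N, 0, Ps) ->
    [From ! {ack, Ref} || {From, Ref} <- Ps],
    coordinator(N, N, []);
coordinator(N, M, Ps) ->
    receive
        {reach, From, Ref} ->
            coordinator(N, M - 1, [{From, Ref} | Ps])
    end.

run() ->
    N = 3,
    Barrier = spawn(fun() -> coordinator(N, N, []) end),
    Parent = self(),
    %% N-1 workers reach the barrier and report once they get past it.
    [spawn(fun() -> reach_wait(Barrier), Parent ! {passed, Id} end)
     || Id <- lists:seq(1, N - 1)],
    %% Only N-1 processes have arrived, so nobody can have passed yet.
    Early = receive {passed, _} -> passed after 100 -> none end,
    %% The caller is the N-th process; its arrival releases all waiters.
    true = reach_wait(Barrier),
    Passed = lists:sort([receive {passed, Id} -> Id end
                         || _ <- lists:seq(1, N - 1)]),
    exit(Barrier, kill),
    {Early, Passed}.
```

Calling barrier_demo:run() should return {none, [1,2]}.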

Resource allocation revisited

A controller controls access to copies of some resources (of the same kind)

Clients requiring multiple resources should not ask for resources one at a time

- Why would this be bad?

Clients make requests to take or return any number of the resources

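A request should only succeed if sufficiently many resources are available (see the guard Number =< Available in loop)

Otherwise the request must block: a req message that fails the guard stays in the mailbox until enough resources are returned

Function lists:sublist returns a slice of a list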

loop(Resources) ->

Available = length(Resources),

receive

{req, From, Ref, Number} when Number =< Available ->

From ! {res, Ref, lists:sublist(Resources, Number)},

loop(lists:sublist(Resources, Number+1, Available)) ;

{ret, List} -> loop(lists:append(Resources, List))

end.

start(Init) ->

Pid = spawn (fun () -> loop(Init) end),

register(rserver, Pid).

request(N) ->

Ref = make_ref(),

rserver ! {req, self(), Ref, N},

receive

{res, Ref, List} -> List

end.

release(List) ->

rserver ! {ret, List},

ok.

- Example

> c(ralloc).

{ok,ralloc}

> ralloc:start([1,1,1,1]).

true

> ralloc:request(3).

[1,1,1]

> ralloc:release([1]).

ok

> ralloc:request(2).

[1,1]

> ralloc:request(10).

- In the last line, the process blocks
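The blocking behaviour can also be observed from a second process. The following sketch makes some assumptions: the module name ralloc_demo is hypothetical, the server is addressed by pid rather than the registered name rserver, and the 100 ms timeout is used only to check that the second client has not been served. A request that fails the guard stays in the server's mailbox and is matched again once resources have been returned.

```erlang
-module(ralloc_demo).
-export([run/0]).

%% Controller loop, as above.
loop(Resources) ->
    Available = length(Resources),
    receive
        {req, From, Ref, Number} when Number =< Available ->
            From ! {res, Ref, lists:sublist(Resources, Number)},
            loop(lists:sublist(Resources, Number + 1, Available));
        {ret, List} ->
            loop(lists:append(Resources, List))
    end.

%% Client functions with an explicit Server argument (an assumption).
request(Server, N) ->
    Ref = make_ref(),
    Server ! {req, self(), Ref, N},
    receive
        {res, Ref, List} -> List
    end.

release(Server, List) ->
    Server ! {ret, List},
    ok.

run() ->
    Server = spawn(fun() -> loop([a, b, c]) end),
    Parent = self(),
    [a, b] = request(Server, 2),
    %% A second client asks for 2 while only 1 is left: it must wait.
    spawn(fun() -> Parent ! {got, request(Server, 2)} end),
    Blocked = receive {got, _} -> false after 100 -> true end,
    %% Returning resources lets the pending request match the guard.
    ok = release(Server, [a, b]),
    Got = receive {got, L} -> L end,
    exit(Server, kill),
    {Blocked, Got}.
```

Calling ralloc_demo:run() should return {true, [c,a]}: after the release the pool is [c,a,b], and the waiting client receives its first two elements.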

Readers and writers revisited

Two kinds of processes share access to a “database”

Readers examine the contents

- Multiple readers allowed concurrently

Writers examine and modify data

- A writer must have exclusive access (mutual exclusion)

Readers and writers in a few lines

loop(Rs, Ws) ->

receive

{start_read, From, Ref} when Ws =:= 0 ->

From ! {ok_to_read, Ref},

loop(Rs+1,Ws) ;

{start_write, From, Ref} when Ws =:= 0, Rs =:= 0 ->

From ! {ok_to_write, Ref},

loop(Rs, Ws+1) ;

end_read -> loop(Rs-1, Ws) ;

end_write -> loop(Rs, Ws-1)

end.

- Is it a fair solution?

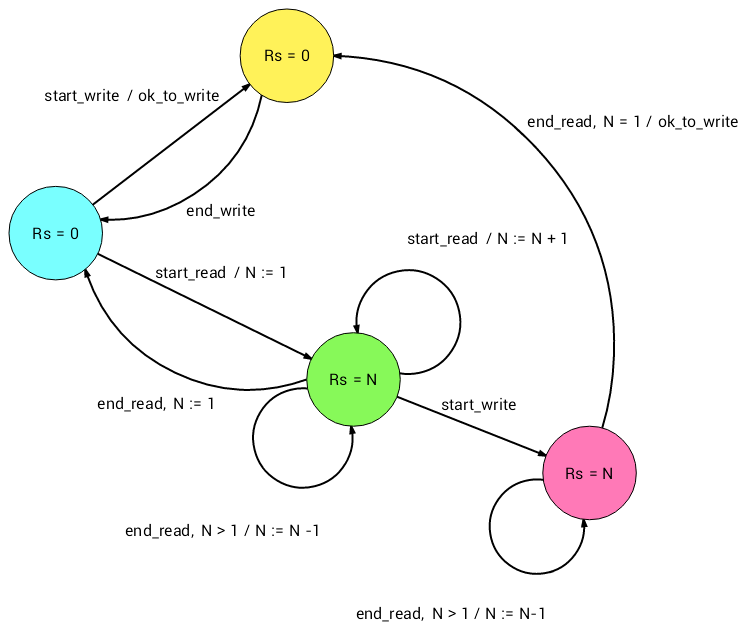

Fair readers and writers

- State diagram

- The format of the events is

<received event>, <condition> / <triggered event>

loop() ->

receive

{start_read, From, Ref} ->

From ! {ok_to_read, Ref},

loop_read(1),

loop() ;

{start_write, From, Ref} ->

From ! {ok_to_write, Ref},

receive

end_write -> loop()

end

end.

loop_read(0) -> ok ;

loop_read(Rs) ->

receive

{start_read, From, Ref} ->

From ! {ok_to_read, Ref},

loop_read(Rs+1) ;

end_read -> loop_read(Rs-1) ;

{start_write, From, Ref} ->

[ receive end_read -> ok end

|| _ <- lists:seq(1,Rs) ],

From ! {ok_to_write, Ref},

receive

end_write -> ok

end

end.

- At top level, function loop relies on the fairness property of Erlang (i.e. the oldest message that matches any guard is processed)

- Function loop_read implements fairness

- Line [ receive end_read -> ok end || _ <- lists:seq(1,Rs) ] performs as many receives as the number Rs
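The slides show only the server side of the protocol. One possible client side is sketched below, with several assumptions: the module name rw_demo and the wrappers with_read/2 and with_write/2 are hypothetical, and the 100 ms timeout only serves to observe that the writer is kept waiting. The fair server is repeated so the module compiles on its own. The caller holds read access while a writer queues up; the writer gets in only after the reader sends end_read.

```erlang
-module(rw_demo).
-export([run/0]).

%% Fair readers-writers server, as above.
loop() ->
    receive
        {start_read, From, Ref} ->
            From ! {ok_to_read, Ref},
            loop_read(1),
            loop();
        {start_write, From, Ref} ->
            From ! {ok_to_write, Ref},
            receive
                end_write -> loop()
            end
    end.

loop_read(0) -> ok;
loop_read(Rs) ->
    receive
        {start_read, From, Ref} ->
            From ! {ok_to_read, Ref},
            loop_read(Rs + 1);
        end_read -> loop_read(Rs - 1);
        {start_write, From, Ref} ->
            %% Wait for all active readers to finish before the writer starts.
            [receive end_read -> ok end || _ <- lists:seq(1, Rs)],
            From ! {ok_to_write, Ref},
            receive
                end_write -> ok
            end
    end.

%% Hypothetical client wrappers: run Fun while holding read/write access.
with_read(Server, Fun) ->
    Ref = make_ref(),
    Server ! {start_read, self(), Ref},
    receive {ok_to_read, Ref} -> ok end,
    try Fun() after Server ! end_read end.

with_write(Server, Fun) ->
    Ref = make_ref(),
    Server ! {start_write, self(), Ref},
    receive {ok_to_write, Ref} -> ok end,
    try Fun() after Server ! end_write end.

run() ->
    Server = spawn(fun loop/0),
    Parent = self(),
    Result = with_read(Server, fun() ->
        %% While we are reading, a writer asks for access.
        spawn(fun() ->
                  with_write(Server, fun() -> Parent ! wrote end)
              end),
        %% The writer must not be admitted before we send end_read.
        receive wrote -> writer_overtook after 100 -> writer_waited end
    end),
    %% After end_read, the writer is admitted and reports back.
    receive wrote -> ok end,
    exit(Server, kill),
    Result.
```

Calling rw_demo:run() should return writer_waited. The try ... after in the wrappers sends end_read or end_write even if Fun fails, so a crashing client function does not leave the server waiting forever.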